Published by Andriy Burkov, author of The Hundred-Page Machine Learning Book: Link

Joe Dery published the article “Why Decision Intelligence Is the Future of Analytics – And How It Delivers Real Outcomes”. He poses a challenge: “Take a problem your team is tackling right now and reframe it through the lens of Decision Intelligence (DI). Map out the decision-making processes tied to that challenge. Where could you enhance decisions? What tasks could be automated? What behaviors could you ethically influence? And most importantly, where would analytical insights make the biggest impact if embedded directly into the process?” He states: “With DI, it’s not just about analyzing data – it’s about building GPS ecosystems for decisions. These systems are dynamic, adaptive, and designed to guide us toward better outcomes, step by step.” Link

This article was published by Stefaan Lambrecht, a frequent DecisionCAMP presenter: “You’re a DMN decision modeler, about to embark on a new project. You’re excited, a little nervous, and ready to face the challenges that lie ahead. But there’s one thing you can’t control – the complexity of the business or business unit you’re about to tackle... Most likely DMN decision models will never form the basis for the logic of self-driving cars, but we can’t deny the fact that business complexity is here to stay, and it’s only going to get more intricate. Let’s face it, we live in a world where the demands on products, services, and compliance with rules and legislation are constantly increasing.” Link

Deepak Menta started this discussion on LinkedIn: “Data is the raw material for Generative AI and Machine Learning. So why isn’t it treated like one in other industries?” “Imagine a future where all we humans do is feed data to AI 😊. At that point, the value depends on who feeds what! Jokes aside, I think there is a need to rethink how value flows back to the creators when their contributions significantly advance AI systems.” Link

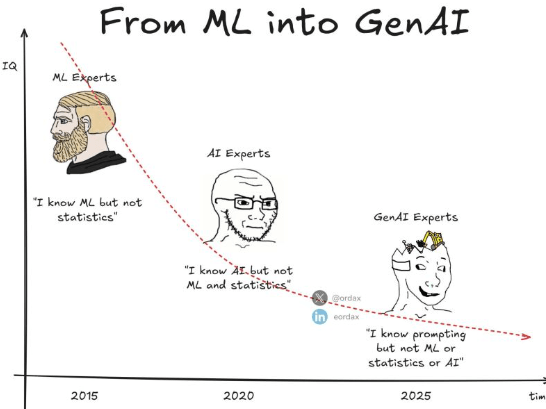

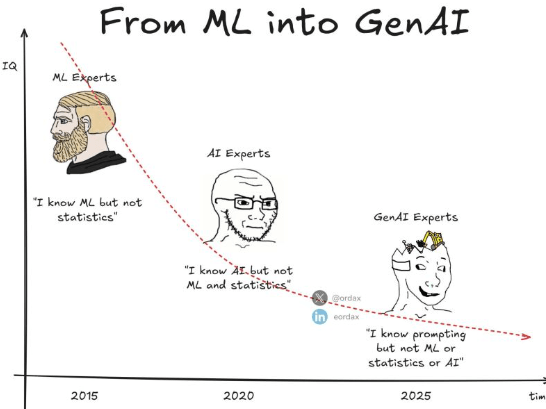

This discussion is under way on LinkedIn. There are three concepts that should not be confused:

1. The business problem you aim to solve.

2. The solver you use to tackle your problem.

3. The model you use to tell the solver what the problem is that you need solved.

Doesn’t the model come before the solver? Carlos Armando Zetina highly recommends investing time in selecting the right model/formulation before selecting the right solver. Meinolf Sellmann says: “No! The model is the simplification of the world that you make to support your solver. Therefore, the solver dictates the simplifications you should make. This is not the order that achieves the best results for the business.” What do you think? Link

This article attempts to explain how Large Language Models (LLMs) work, “from scratch — assuming only that you know how to add and multiply two numbers. The article is meant to be fully self-contained. We start by building a simple Generative AI on pen and paper, and then walk through everything we need to have a firm understanding of modern LLMs and the Transformer architecture. The article will strip out all the fancy language and jargon in ML and represent everything simply as they are: numbers. We will still call out what things are called to tether your thoughts when you read jargon-y content.” Link

This month we ask our readers to solve “Cheryl’s Birthday”, a well-known logic puzzle from Singapore. The objective is to determine the birthday of a girl named Cheryl using a handful of clues given to her friends Albert and Bernard.

Last month Peter Norvig asked nine LLM chatbots to solve this problem and concluded: “Most of them were familiar with the problem and correctly recalled the answer: July 16. But none of them were able to write a program that finds the solution. They all failed to distinguish the different knowledge states of the different characters over time. At least with respect to this problem, they had no theory of mind.” Can you do better? Link
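For readers who want a starting point, here is a minimal Python sketch of the elimination reasoning. It is not Peter Norvig's program, and it rests on assumptions that do not appear in the text above: the ten candidate dates and the three statements are taken from the commonly circulated version of the puzzle (Albert is told the month, Bernard the day), and the names `DATES`, `unique_by`, and `solve` are made up for illustration. Each statement is treated as a filter on the set of dates that are still possible, which is one simple way to track who knows what at each step.

```python
from collections import Counter

# Assumption: the ten candidate dates from the commonly circulated puzzle statement.
DATES = [
    ("May", 15), ("May", 16), ("May", 19),
    ("June", 17), ("June", 18),
    ("July", 14), ("July", 16),
    ("August", 14), ("August", 15), ("August", 17),
]

def unique_by(dates, index):
    """Return the dates whose month (index 0) or day (index 1) occurs exactly once."""
    counts = Counter(d[index] for d in dates)
    return [d for d in dates if counts[d[index]] == 1]

def solve(dates):
    # Statement 1 (Albert, who knows the month): "I don't know the birthday,
    # and I know that Bernard doesn't know either."
    # Albert doesn't know -> his month has more than one candidate date.
    # Albert knows Bernard doesn't know -> no date in his month has a unique day.
    month_counts = Counter(month for month, _ in dates)
    revealing_days = {day for _, day in unique_by(dates, 1)}
    revealing_months = {month for month, day in dates if day in revealing_days}
    after_1 = [
        (month, day) for month, day in dates
        if month_counts[month] > 1 and month not in revealing_months
    ]

    # Statement 2 (Bernard, who knows the day): "At first I didn't know, but now I do."
    # -> the day is unique among the dates that survived statement 1.
    after_2 = unique_by(after_1, 1)

    # Statement 3 (Albert): "Then I also know."
    # -> the month is unique among the dates that survived statement 2.
    return unique_by(after_2, 0)

if __name__ == "__main__":
    print(solve(DATES))
```

Under these assumptions the script prints `[('July', 16)]`, which matches the answer quoted above. The key design choice is that each filter is computed against the set of dates left by the previous statement, not against the original list.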

This challenge is about a decision model that can help a boat rental company purchase different boats while satisfying seating, manufacturers, and limited budget requirements. We received 9 solutions and 3 of them used LLMs. Link

DecisionCAMP-2024 on Sep. 18-20 goes down in history as another successful Decision Intelligence event. It included many interesting presentations, discussions, and an expert panel – you can find all recordings and slides in the Program.

Incorporation of LLMs into Decision Intelligence Platforms was a hot topic at DecisionCAMP-2024 and many vendors started adding AI Assistants to their DI products. However, with conflicting GenAI experiences and predictions, our Community continues to successfully apply Decision Intelligence with advanced rule engines, machine learning, and optimization tools to build practical decisioning systems. Watch the Closing Remarks.

A few days ago at DecisionCAMP-2024, Peter Voss presented “The Third Wave of AI: From rules, to statistics, to cognition”, explaining why LLMs are not on the path towards Cognitive AI [AGI]. Today Peter published an even stronger article titled “The Insanity of Huge Language Models” with supporting quotes from Yann LeCun, Demis Hassabis, and even Sam Altman.

Blending LLMs with Decision Intelligence (DI) Platforms was a hot topic at DecisionCAMP, and many vendors started adding AI Assistants to their DI products. At the same time, the latest OpenAI o1-preview claims reasoning capabilities with which “we’ll no longer need Symbolic AI to make good decisions”. Will such claims actually be justified within real-world decision-making applications? With conflicting experiences and predictions, our Community continues to apply Decision Intelligence with rule engines, machine learning, and optimization tools to build practical decisioning systems while cautiously experimenting with LLM-based assistants. Listen to the Closing Remarks and share your expectations from DI platforms for 2025-26.
