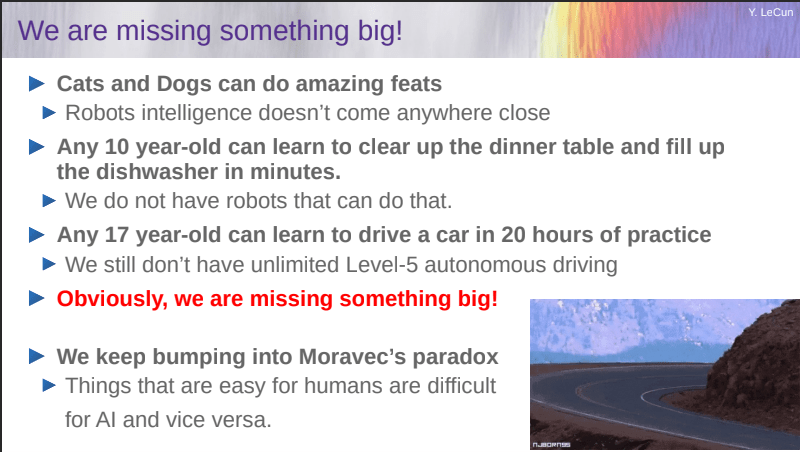

Vincent Lextrait started an interesting discussion on LinkedIn about why “diagram-based engineering”, the approach to which Low Code/No Code belongs, has been tried every 20 years and has always failed: “Just ask Grady Booch who co-invented UML. He’ll tell you that the failure is due to the fact that diagrams are inherently imprecise. Granted they are more precise than natural language, but they still fall short to capture the complexity of business applications. Nobody will try as hard as Grady. It’s just that the industry has forgotten now, it was 20 years ago (the same idea with flow charts 20 years before failed too). Oh you can deliver stuff, but it’ll be simple, not future-proof and you’ll enjoy short happiness. And bad performance. To reach the right level of finesse (and exceed it), you need text-based input: code. This is why math people invented their own language, because natural language was an obstacle to progress.” Link
